Recently, in a conversation with some colleagues, a question arose that is quite common in the world of agility. How can we measure the efficiency of our team?

Very quickly, the conversation turned toward knowledge of the team’s velocity. At that moment I commented:

But efficiency is not just a matter of speed, is it? And before answering, we should really understand what it means for a team to be “efficient”.

Efficacy is directly related to achieving the established objectives. An action is efficacious when it achieves the expected result, regardless of how the available resources have been used.

In the business context, efficacy focuses on the “what”. It involves meeting objectives, achieving commercial goals, delivering committed functionalities, or reaching specific business milestones. It is a clear and easy-to-evaluate criterion, as it is directly linked to observable and measurable results.

This approach is useful for aligning teams around concrete objectives and focusing them on a clear goal. However, focusing exclusively on efficacy can obscure relevant aspects such as the sustainability of the effort, the use of talent, or the rational use of resources.

In traditional organizational environments, it is common to assume that if objectives are achieved, the system works. But this view can hide structural inefficiencies, hidden costs, or competition for resource allocation that can lead to serious problems in the medium term.

Efficacy therefore focuses on maximizing results, using whatever resources are needed to do so, and ultimately prioritizes results over the optimization of the resources used.

Efficiency has to do with the appropriate use of available resources. In projects, the first limitation (or the most obvious one) is cost. Someone decides that a given product has a cost ceiling—whether through a tender, a commercial offer, or any other type of prior calculation. But it is also common to face:

When we talk about constraints, however, we are not referring to working under scarcity, but rather to paying attention to the relationship between cost and outcome. Even if you have unlimited resources, efficiency means using what is truly optimal for the value we aim to achieve.

Efficiency consists of being aware of the tools we have at our disposal and using them in the best possible way to obtain the desired results.

In complex and uncertain environments, typical of modern product development—where agile frameworks and good practices are especially well suited—achieving objectives does not always imply generating real value. Often, in order to ensure product evolution, aspects such as learning, the value obtained, continuous feedback, or uncertainty reduction have a greater impact on the product’s future than the delivery of functionalities.

In agile product development contexts, both efficacy and efficiency only make sense if they are related to the value generated.

In product development, it is not enough to achieve objectives if this is done at an excessive cost or with a negative impact on team sustainability. Nor is it sufficient to optimize resources if the result does not generate useful value for users.

Are we looking for fast teams? Or are we looking for teams that work in an environment where real and sustainable impact can be generated?

Effectiveness integrates the best of efficacy (achieving the objectives) and efficiency (making good use of resources).

In agile environments, effectiveness is expressed when teams are able to deliver value sustainably, with quality, continuous learning, and adaptability. The real goal of teams is not to be merely efficient or efficacious, but to be effective.

In product and project management, it is common to use delivery velocity as a basic performance indicator. However, speed alone does not describe a team’s effectiveness or the quality of the working system.

A team may deliver very quickly and, at the same time, generate rework, technical debt, or low-value functionalities. Likewise, a team may move at a moderate but stable pace, delivering high-quality increments with real impact for users.

For this reason, velocity should be interpreted as an indicator of capacity (or work pace), not as a direct measure of effectiveness. To obtain a more complete view of a team’s performance, it is advisable to incorporate other relevant parameters, such as:

These elements allow effectiveness to be evaluated with a broader scope and aligned with the principles of continuous improvement, learning, and sustainability inherent to agile environments. All of these indicators, which we will discuss next, help us understand not only how much is delivered, but how healthy the working system is.

In this article we will not cover all the dimensions introduced here. You can find all the defined metrics in the following article: Effective teams. How to evaluate effectiveness beyond speed.

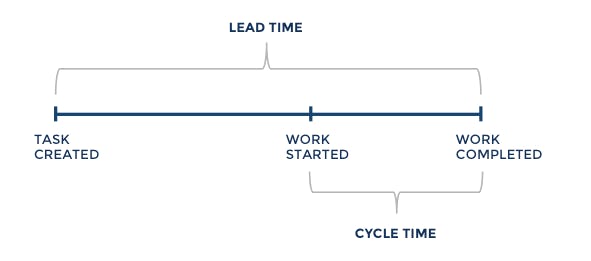

When analyzing a team’s effectiveness, the focus is often placed on technical build time (cycle time). However, limiting the analysis to this phase alone offers a partial view of the problem. There is much more that happens before construction that will determine the success or failure of our delivery commitments.

Cycle time measures the time that elapses from when a task actively starts until it is completed. It is a useful indicator for understanding development capacity and the flow of technical work.

Before a need reaches the construction phase, it typically goes through discovery, definition, validation, and prioritization processes. These processes also consume time and resources, and directly influence the final value we will be able to deliver.

Lead time measures the total time from when a need is identified or formulated until it is delivered. This indicator allows us to analyze the work system as a whole, beyond the technical phase and beyond the development team.

A high lead time can delay feedback, increase uncertainty, and raise the risk of misalignment with real user or business needs.

For this reason, optimizing workflow must consider the entire process, from the initial idea to delivery. Improving only the construction phase may result in technical improvements, but not necessarily in better quality outcomes, value, or suitability.
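Both indicators can be written down as simple date differences. The sketch below is only illustrative: the `WorkItem` class, its field names, and the sample dates are assumptions made for this example, not something defined by any particular tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class WorkItem:
    """A need tracked through the system (hypothetical structure)."""
    requested: date   # when the need was identified or formulated
    started: date     # when the team actively started building it
    delivered: date   # when it was delivered

    @property
    def cycle_time(self) -> int:
        """Days from active start to delivery."""
        return (self.delivered - self.started).days

    @property
    def lead_time(self) -> int:
        """Days from the original request to delivery."""
        return (self.delivered - self.requested).days

    @property
    def waiting_time(self) -> int:
        """Days the need spent waiting before construction began."""
        return (self.started - self.requested).days


# Invented sample dates.
item = WorkItem(date(2024, 1, 8), date(2024, 2, 5), date(2024, 2, 19))
print(item.cycle_time, item.lead_time, item.waiting_time)  # 14 42 28
```

Seen this way, lead time is always cycle time plus whatever time the need spent waiting before anyone started building it.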

Problem description

Let’s imagine a functionality that requires two weeks to build, but spends two months in validation and prioritization before being started.

What does this tell us?

The problem is not the team’s technical capacity, but the time the need spends waiting within the system.
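To put numbers on this example, the short calculation below assumes that "two months" means roughly eight weeks (56 days) and that nothing else adds delay. Both are simplifications made only for illustration.

```python
# Assumed figures for the example above, in days.
waiting_days = 56   # about two months in validation and prioritization (assumption: 8 weeks)
build_days = 14     # two weeks of construction (the cycle time)

lead_days = waiting_days + build_days       # total time the user waits
build_share = build_days / lead_days        # fraction of the lead time spent building
lead_to_cycle = lead_days / build_days      # how many times longer the lead time is

print(f"Lead time: {lead_days} days")
print(f"Share of lead time spent building: {build_share:.0%}")
print(f"Lead time is {lead_to_cycle:.0f}x the cycle time")
```

Under these assumptions, construction accounts for only a fifth of the time the user waits, so even halving the build time would remove just 7 of the 70 days.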

Possible impacts if not addressed

This can trigger significant negative effects for the project, such as:

Possible solution

Make the situation visible. Explore less hierarchical validation mechanisms. Use a work board for the preparation stage so that the status of this and other tasks going through the process is visible. Limit the number of tasks in validation at any one time (apply a WIP limit).
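A WIP limit is a simple rule: nothing new enters a stage until something leaves it. A minimal sketch of that rule, where the `ValidationColumn` class, the item names, and the limit of 2 are all hypothetical:

```python
class ValidationColumn:
    """A board column that refuses new items once its WIP limit is reached."""

    def __init__(self, wip_limit):
        self.wip_limit = wip_limit
        self.items = []  # names of the items currently in validation

    def try_add(self, item):
        """Add the item if there is room; return False when the limit blocks it."""
        if len(self.items) >= self.wip_limit:
            return False
        self.items.append(item)
        return True

    def finish(self, item):
        """Remove an item once its validation is complete, freeing a slot."""
        self.items.remove(item)


column = ValidationColumn(wip_limit=2)  # the limit of 2 is an arbitrary example value
results = [column.try_add(name) for name in ("export", "search", "billing")]
print(results)  # [True, True, False]

column.finish("export")
print(column.try_add("billing"))  # True
```

The blocked item is the useful signal: it forces a conversation about finishing a pending validation before starting another one.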

The quality of the result is a key factor in a team’s real effectiveness. Delivering quickly but with defects, technical shortcomings, or an unvalidated product generates additional work, increased costs, and loss of trust.

The Definition of Done (DoD) establishes the criteria that an increment must meet to be considered complete and usable by users. It is an explicit agreement that guarantees a minimum level of quality shared by the team and the organization. Some of these criteria are:

The DoD helps prevent the delivery of increments from accumulating technical debt or degradation that affects the overall product quality. In this way, it enables the team to work in a stable manner, considering aspects that go beyond the mere construction of new functionalities.

For this reason, consistent compliance with the DoD is also a relevant indicator of effectiveness. A team that delivers increments that meet the DoD reduces refactoring needs, improves product reliability, and facilitates decision-making based on truly finished and useful increments. The DoD also makes explicit what it means for work to be truly done, facilitating transparency between the team and the organization. This clarity helps align expectations and understand the level of quality required to generate value sustainably.
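One way to observe DoD compliance as an indicator is to count how many delivered increments satisfy every criterion. The sketch below assumes three made-up criteria and invented sample data; a real team would substitute its own agreed list.

```python
# Hypothetical DoD criteria, used only for illustration.
DOD_CRITERIA = ("code_reviewed", "regression_tests_passed", "validated_with_user")


def meets_dod(increment):
    """An increment is done only if every criterion is satisfied."""
    return all(increment.get(criterion, False) for criterion in DOD_CRITERIA)


def dod_compliance_rate(increments):
    """Share of delivered increments that fully meet the DoD (0.0 to 1.0)."""
    if not increments:
        return 0.0
    return sum(meets_dod(i) for i in increments) / len(increments)


# Invented sample data: one dict per delivered increment.
delivered = [
    {"code_reviewed": True, "regression_tests_passed": True, "validated_with_user": True},
    {"code_reviewed": True, "regression_tests_passed": False, "validated_with_user": True},
    {"code_reviewed": True, "regression_tests_passed": True, "validated_with_user": True},
    {"code_reviewed": True, "validated_with_user": True},  # regression tests were skipped
]
print(dod_compliance_rate(delivered))  # 0.5
```

A criterion that was skipped counts the same as one that failed, so the rate cannot be improved by simply not recording a check.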

Problem description

A team is under pressure regarding its Cycle Time and, to go faster, decides to skip certain tests that traditionally require a lot of time and effort, such as regression tests.

The organization immediately perceives the increase in speed. Everything suggests the team has significantly improved efficiency. But is it effective?

After some time, an important feature is deployed and causes a critical and unexpected error.

What does this tell us?

The apparent initial time savings end up turning into technical debt and serious product stability issues.

Possible impacts if not addressed

The apparent initial time savings end up turning into technical debt and serious product stability issues.

Possible solution

The DoD is a fundamental piece of the framework. It is a safeguard for a team focused on quality, not just speed. We must explain the importance of this tool for team effectiveness—not only within the team but across the organization. This is an example of apparent efficiency. What we seek is real effectiveness.

In iterative environments, each iteration should deliver some kind of value to its recipients. This value is not always a product increment; it can also be learning, hypothesis validation, or uncertainty reduction.

When increments are neither used nor validated, one of the main strengths of iterative cycles is lost: the ability to receive continuous feedback. Without this feedback, the risk of building solutions that do not meet real user needs increases.

For this reason, it is useful to distinguish between output (what is built) and outcome (the impact it generates). A team may have a lot of output and yet little real impact.

It is not just about delivering product per se, but about validating whether the chosen direction in product evolution generates real value. A team may be very efficient operationally and yet be building the wrong product. When evaluating a team’s effectiveness, it can be useful to observe indicators related to the value obtained, such as:

These indicators help determine whether the effort invested translates into truly useful value, rather than just delivery volume.

Problem description

A team delivers many new features, but they do not align well with what users need now. Users keep asking for other things that are never delivered.

What does this tell us?

The team is generating outputs, which may look efficient. But those outputs are not necessarily outcomes. The problem is not capability, but alignment with real value.

Possible impacts if not addressed

This can trigger significant negative effects, such as:

Possible solution

As Scrum Masters, we should have a value-focused conversation with the Product Owner to raise awareness of the importance of a well-prioritized Product Backlog.

As we saw at the beginning of the article, to obtain a more complete view of a team’s performance, it is advisable to incorporate other relevant parameters, such as:

You can find detailed information about the metrics we have not covered here at the following link: Effective teams. How to evaluate effectiveness beyond speed.

Measuring effectiveness makes sense when it helps us make better decisions. Measurement should not become an end in itself. As Goodhart’s Law puts it: “When a measure becomes a target, it ceases to be a good measure.” Metrics, evaluations, and tools are means to achieve cohesive teams, more valuable communication, and better products.

Working with these metrics aims to initiate conversations in the active pursuit of improvement, not merely to collect information to feed a dashboard or audit teams. Let’s turn this information into catalysts that foster collaboration and mutual understanding between teams and the organization.

Take, for example, the difference between Lead Time and Cycle Time. We have already seen in the practical example that a short Cycle Time cannot guarantee real and useful value for users if Lead Time is excessive. Once this issue is identified, we can track the evolution of these two indicators over time to assess whether our conversations and proposed solutions are effective.

We do not even need a technological tool or a dashboard to do this tracking. Note the date when some important functionalities were requested, the date when they started being built, and the date when they were delivered. Do this for a period of time and observe whether waiting time decreases or not—that is, whether Lead Time moves closer to Cycle Time.

In five minutes and with post-its (or a small spreadsheet), you can have a powerful tool at hand. And it will be a tool that seeks improvement not only in the technical environment, but across the entire organization.
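If the notes end up in a small script instead of a spreadsheet, the tracking could look like the sketch below. The dates are invented sample data, and comparing the older half of the log with the more recent half is just one simple, assumed way of checking the trend.

```python
from datetime import date
from statistics import mean

# Hand-noted dates for a few functionalities: (requested, started, delivered).
# All values are invented sample data.
log = [
    (date(2024, 1, 2), date(2024, 2, 27), date(2024, 3, 12)),
    (date(2024, 1, 15), date(2024, 3, 4), date(2024, 3, 18)),
    (date(2024, 3, 1), date(2024, 3, 29), date(2024, 4, 12)),
    (date(2024, 3, 11), date(2024, 4, 1), date(2024, 4, 15)),
]


def waiting_days(entry):
    """Days between the request and the start of construction."""
    requested, started, _ = entry
    return (started - requested).days


def lead_to_cycle_ratio(entry):
    """How many times longer the lead time is than the cycle time."""
    requested, started, delivered = entry
    return (delivered - requested).days / (delivered - started).days


# Compare the older half of the log with the more recent half.
half = len(log) // 2
earlier = mean(waiting_days(e) for e in log[:half])
recent = mean(waiting_days(e) for e in log[half:])

print(f"Average waiting: {earlier} days earlier, {recent} days recently")
print("Waiting time is decreasing" if recent < earlier else "No improvement yet")
```

The `lead_to_cycle_ratio` helper gives the kind of figure that is easy to bring to a conversation, such as "Lead Time is several times longer than Cycle Time".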

How can we involve the organization in this insight? The Retrospective meeting could be the ideal space to raise a question: “We’ve discovered that Lead Time is four times longer than Cycle Time. What can we do to reduce the waiting time for the most critical functionalities?”

Another example: having discussed the quality of the Product Backlog and the need for proper refinement, we must make sure this does not remain just talk. We could propose, for example, that at the start of Sprint Planning the team rates (say, from 1 to 5) the quality of the backlog information for each item to be addressed. This gives us information (subjective but useful) about whether our refinement efforts are on the right track.
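The ratings can be summarized in a few lines. In this sketch the item names, the votes, and the cut-off of 3 are all assumptions chosen for illustration.

```python
from statistics import mean

LOW_QUALITY_THRESHOLD = 3  # assumed cut-off: averages below this call for more refinement

# Ratings (1 to 5) given by team members to each backlog item; invented sample data.
ratings = {
    "Export report to PDF": [4, 5, 4],
    "Search by customer": [2, 3, 2],
    "Password reset": [5, 4, 4],
}

# Average rating per item, then the items that fall below the cut-off.
item_scores = {item: mean(votes) for item, votes in ratings.items()}
needs_refinement = [
    item for item, score in item_scores.items() if score < LOW_QUALITY_THRESHOLD
]

# One overall figure per Sprint Planning, to compare from sprint to sprint.
sprint_score = mean(item_scores.values())

print(needs_refinement)
print(round(sprint_score, 2))
```

Tracking the overall score across several sprints shows whether refinement is improving, while the flagged items point to where the next refinement session should start.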

Ultimately, an effective team is one that generates a positive impact on the product in a sustainable way over time. This is, at its core, the objective of agility. A good product is built by good teams; and good teams can exist where value orientation and sustainability matter more than speed.